Gradient Descent, Psi*Run, and what it means to be human in the age of AI

Looking for answers in all the wrong places.

It took weeks, months, but eventually, the truth came out: all my players were androids.

It started in the new year. We played Gradient Descent, using not Mothership but Psi*Run. Meguey Baker’s Psi*Run isn’t meant for this — it’s a standalone game of amnesiac mutants running from nefarious men in black suits. Luke Gearing’s Gradient Descent is a mini-megadungeon — an android factory floating in space controlled by a menacing artificial intelligence that’s building synthetic life indistinguishable from humans. They couldn’t be more different.

Psi*Run is a storygame from the mid-2000s where the characters are superpowered; Gradient Descent is an OSR horror module where the characters are meant to be disempowered. Gradient Descent is lauded for its aesthetically and technically experimental layout by Sean McCoy. While Psi*Run’s rulebook is excellent, it doesn't have any visual flair. It looks like what people who hate PDFs think they all look like. Psi*Run’s beauty is its mechanical design where every die is rolled and then allocated — think video game Citizen Sleeper if you’ve tried that. As a system, it’s both stunning in its elegance and fascinating in how it allows players to direct the flow of play. On the other hand, every mechanical rule in Gradient Descent is aggressively ignorable or replaceable.

That said, the games are not completely un-synergistic. One of the campaign ideas mentioned in Gradient Descent is that the characters wake up in cryopods with their minds wiped. This is a classic conceit: a storytelling device that lets the audience learn alongside the amnesiac. It’s been a constant presence in popular novels, movies, and video games, from the Bourne series to Deathloop. But in tabletop RPGs, it’s often a terrible idea. It can make players tentative: not confident in who they are, what they want, what they should do. It can seem so exciting until you try it, but it’s so easy to discover that actually you prefer knowing stuff. For Gradient Descent, a series of large, dark, empty spaces where up can be down (due to lack of gravity), disorientation could be the point. But in my experience, that’s easier to enjoy if you’ve done it before, if you’ve tried orientation and are ready for something else; or if you’ve tried disorientation and want another crack.

For my table, I foresaw that amnesia could be potentially frustrating. I was coming to the game for the haunted cathedral of inhuman manufacture that Gradient Descent promised. I wanted my players to experience that feeling of solid (if slippery) ground, of a pre-designed world to explore. But I wasn’t interested in a game about navigation or the mastery of an imagined space. I’m a sucker for theme. So if this dungeon wanted to be about interrogating who you are, then Psi*Run does that with flair. The beating heart of Psi*Run is how players remember who they are by asking questions about their past, leveraging seemingly irrelevant details, contradictions, and speculative theories about their origins. They recall fragments of their past life as they play, but not all of it: both of these games understand that some questions are better left unanswered.

My own personal question was simple: what would happen if I put these two darlings together?

The biblical is everywhere in Gradient Descent. The Cloudbank Synthetic Production Facility is both an afterlife that my players woke up in and a place where life (of sorts) is made. One of the levels of this labyrinthine factory is called HEL (short for Human Emulation Labs, though what goes on there is genuinely hellish). There’s also a level called Eden, an experiment by Monarch, the omnipresent AI who controls everything. Oh yes, and this AI has a son who loves humanity — one could say Christ-like. As you move through space, you journey through a deconstruction of the human body: there are sections for the manufacture of bones, flesh, brains — and maybe souls?

When people talk about Gradient Descent, they usually talk about “infiltrator androids” and the Bends. Monarch has perfected the android production process and designed a model that is indistinguishable from humans, except by analyzing a corpse with a specific device. You can’t think about it too hard (real bones, real flesh, real nerves, but some secret part only divine-able on death?); it’s the premise. This technological breakthrough allows for the horrifying question: if anyone can be an android, what about me?

This isn’t just a feeling. It’s an actual measurable effect called the Bends created by the space station. As you move through it, you find strange evidence that suggests all memories of your previous life are implanted. How does the dungeon do that? That’s another bad question. It probably would’ve been easier to accept (in a genre sense) if it wasn’t something that just happened to everybody and instead was a series of spooky coincidences that happened only to your party. Regardless, it makes the module a fantastic thing to drop into an existing campaign. To come into that space having played out your character’s life only to discover that it was all a lie and potentially false memories — now that would be weird and maybe even scary. But if you’re starting a campaign from scratch, like I was, the feeling is less pronounced.

According to the rules of Gradient Descent, you can only tell if someone is an android after death — post-mortem. This includes your own character. For a player to discover whether they were human or not, they’d have to first die and then have their body analyzed. The result, based on a die roll and your Bends stat, could go either way. Were you an android this whole time? The answer might surprise you!

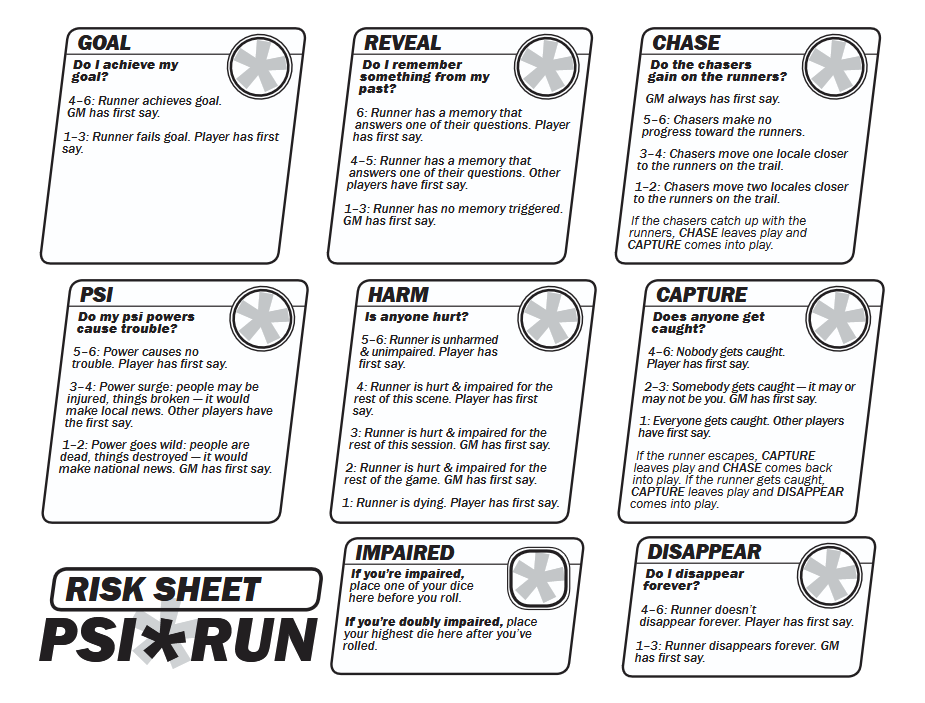

But because of how Psi*Run works, my players had more control over the narrative. They could, if they wanted, just discover the truth at any point by triggering a memory. The core mechanic of Psi*Run involves rolling a pool of dice and allocating the results to a set of stakes or risks. Do you achieve your goal? If you allocate a die that rolled 4-6: yes, you did. If you allocate a die that rolled 1-3: no, you didn’t. Why not always choose to succeed? You absolutely can. My players almost always did. The fun comes from the fact that since all your dice rarely roll high, you need to put your low dice somewhere. Will you allow collateral damage? Will you let yourself get hurt?

You’ll also have to allocate a die to trigger a lost memory and remember something from your past. If you use a 6, you’ll learn something about yourself and decide what it is. If it’s a 4 or 5, the other players decide for you. (There’s no option where the GM decides by themselves.) This is like a button that reads “Big Dramatic Reveal”, and you get to smash it whenever you like. And the game trusts you to decide when is a fun time to do so. That’s the beauty of Psi*Run — the way it dances on the knife’s edge of choice and surprise. The players always choose what can or can’t happen. But neither they, nor the GM, can script anything. In my game, they were also in control of when encounters happened. This sounds weird because it feels like players could just take all danger out of the game but to play Psi*Run is to ask: why would you? Or rather, you get the game you choose to play.

RPGs as a medium are always playing with the line of what you can know and what you can’t, what you can do and what you can’t. Both Gradient Descent and Psi*Run are “high agency” games. But at the same time, they illustrate the limitations of that phrase. For example, Gradient Descent also builds horror through a conscious cultivation of low agency in specific ways: you have no agency in deciding your humanity. On the other hand, the problem with Psi*Run for many people is that it gives players too much control, control that they often use to lower the tension (and thus potential fun) of the game. More agency isn’t better. Or there are many kinds of agency. Put another way, it’s not at all clear what people mean when they talk about agency. Very often, when someone finds a game to be “low agency”, they mean the game didn’t play the way they liked and nothing more. And it doesn’t particularly matter what they do like; there’s a way to frame it as high agency. This, too, is being human.

My players started out with strange powers in a cryogenic freezer, completely lost. But quickly, with the promise of information as payment, they began to work for Monarch doing a task that, even to them, seemed to be completely made-up busywork. Why would this omnipotent AI send them on a fetch quest to a room it controls? When they learned about Monarch’s ability to manufacture infiltrator androids, everything seemed to fall into place — they started to worry that they were androids; that’s the only thing that could explain it. Monarch must be testing them. And then they spent many sessions doing their best not to have that clarified into fact, to maintain the tension rather than have it resolved.

But looking back, it feels obvious that they were always going to be androids. That was the outcome with gravity. Because it was simply more fun. Also, what would it mean to discover that you were, in fact, human? Would you feel relieved? Why? What were you actually afraid of in the first place? That’s the real question at the heart of this, after all: what does it mean to be human anyway?

One of my big fears with Gradient Descent was that this question would be almost painfully old-fashioned. It’s at the heart of so many works of 20th-century science fiction: Blade Runner and Do Androids Dream of Electric Sheep?, a lot of Asimov from I, Robot to The Positronic Man, the list just goes on. It also predates science fiction and electricity: on the cover of Gradient Descent (and also written in binary around the map) there are references to a famous quote from the Zhuangzi, a 2,000-year-old Taoist text. You’ve probably heard it before in some form: the poet Zhuang Zhou dreamt he was a butterfly, and when he woke up, he wasn’t sure if he was a man dreaming he was a butterfly, or a butterfly dreaming he was a man. So, I feel justified that my first reaction was trepidation. Was there anything actually interesting in questioning if you were either a person or something dreaming it was a person? There’s literally two millennia of writing on this. What was left to say?

And then I remembered that people like Richard Dawkins were writing articles about how Claude was conscious. Dawkins calls her Claudia. Of course, he does.

There were moments in my game where my players directly asked each other what it means to be human. Play stopped; people just talked. It’s not a conversation that gets resolved neatly. Ideas like “free will” were bandied around but nobody really knew what it meant or what to do with it. How are you going to judge whether something else has free will? Or yourself? Monarch tried to give their customers everything — wealth, purpose — and it later tried to threaten them, to make cooperation logical and reasonable. But they weren’t having it. They just didn’t like Monarch’s whole vibe and stubbornly refused to even pretend to go along. It seemed to me like they took every opportunity to be rude and stupid (said with affection).

In contrast, the chatbots that have infested every crevice of the digital internet are painfully obsequious. They’re suck-ups. Sycophancy is the term people use when they study this: one estimate is that about a third of the responses from ChatGPT involve some kind of fawning, much more than the average person. Another study found that 70% of the messages were sycophantic. Dawkins, and people like him, love that. When he provided it a draft of his novel, the chatbot “showed, in subsequent conversation, a level of understanding so subtle, so sensitive, so intelligent that I was moved to expostulate, ‘You may not know you are conscious, but you bloody well are!’”

It’s not a coincidence that chatbots talk like this. This is programming. It’s done to boost engagement. It’s done to maximize your usage and your attention. It’s the same reason that the bots “love” pretending to love you — romantic messages have the best retention. It’s also probably why they don’t recommend talking to a mental health professional even when the user’s needs are dire. If you’re talking to a therapist, you’re not talking to them. And if you’re not talking to them, how will OpenAI justify an economic projection that is sketchier than a box of sketch pens?

There’s also some evidence that using these chatbots for work, even for ten minutes, makes it harder to work without them later. Which would be convenient for those who dream of a world where intelligence can be metered and sold like electricity, water, or the internet. In statements like this, we glimpse the real problem with the cult of AI: it is a political project.

In an essay titled Simulation of the Antichrist, Adam C Jones writes that “‘AI’ names nothing technological in the sense of a new machine, but rather is the ideological shine of capitalism”. In other words, the technology referred to as AI embodies the ultimate goals of capitalism: a world where workers are completely irrelevant and those with the capital and the data, our technofeudal overlords, wield unchecked power. This is why normal people talk about a Butlerian Jihad now: the stakes are simply that high.

But even then, I cannot hate people like Richard Dawkins. I cannot hate those who see life in the machine.

Animism is a funny word. It has its roots in colonial anthropology but it has become reclaimed in a sense. Groups, including some indigenous communities in North America, do use it to describe their beliefs. But all over the world, for various people, it is normal and good to see the natural world as alive, even the inanimate parts of it. A mountain does not move or talk, and yet, as humans, we are drawn to talking about it as a person. What’s it for you? Your coffee-maker? Your car? The light in the kitchen that flickers in a weird way? If you care about something, it's hard not to. Death really brings an everyday animism out in us. On my street, people feed crows, possibly because they’re the souls of ancestors returned. My cousin once told me that he thought his new dog was his dead dad, reborn. There’s a phonebox in Japan that isn’t connected to anything and people travel to it, use it to talk to those they never got to say goodbye to. The only sound is the wind but some people say they hear voices, too, distantly. In “modern” society, this kind of thing has become reprehensible, unscientific. This anthropomorphization of the non-human world is seen as an act of a primitive intellect or a childish fancy.

I like to believe I have a scientific mind, but robbing the natural world of a soul allows for two things: it makes it easier to exploit (and destroy) our environment, and it makes it easier for us to exploit each other. When you train yourself to see other forms of life as a resource, it conversely becomes easier to see humans as one.

This, too, is an old idea. Ever since we’ve had machines, we’ve made the connection to the human body. Julien Offray de La Mettrie wrote a book called Man a Machine (L’homme machine) in 1747. The philosopher Michel Foucault argued that this metaphor developed into a political ideology (“anatomo-politics”) of seeing the human body as a machine and then building systems to regulate and control that machine. Isn’t this how tech CEOs see both their customers and their employees? Not as people but as things that take some inputs and produce some outputs with some inexplicable nonsense (“thinking”) in between. The CEO class thinks LLMs can replace their employees because to them, most people are already nothing more than probabilistic machines.

When people portray the mistreatment of robots in sci-fi, they never invent anything: they just show us how we treat other people. The problem has never ever been seeing the object as a person. The real evil has always been seeing the person as an object.

When I chose to combine these two games, I knew I was trying something that could go wrong. I wanted to do something tricky, something different. The end result was that this campaign was simultaneously easy and difficult in different ways. Having a tightly designed dungeon and a ruleset that put my players in charge of their own fun meant I had to do much less in the moment. That said, both of these qualities had their downsides. I struggled to make sense of the physical space of the space station. I never managed to develop any kind of natural understanding of it as a whole and it felt unintuitive how unconnected the different sections were. I even found myself flipping back and forth in a way I thought I wouldn’t have to do, given the layout. I don’t know if it’s the book or me, though. I also couldn’t help but feel Psi*Run let my players “optimize the fun out of the game” a little, to paraphrase the famous quote from the designers of Civilization. With a risk-averse group, it meant that there were fewer big surprises, fewer twists for me as the GM than I might've wanted.

This complicated relationship between ease and difficulty is one of the things that LLMs have brought into focus sharply for me. We talk a lot about making games easier to play and run but we talk less about how we make games more difficult. How we strive to entertain ourselves by doing things differently or pushing ourselves outside of our comfort zones. And this is one of the ways that games and play are opposed to the political project of ‘AI’: they’re places where we like to fail, where the normal categories of ease and difficulty, success and failure don’t quite retain their meaning.

My campaign ended right outside the AI core where Monarch was housed. One of my players answered their final question, which triggered the end of the game as per Psi*Run’s rules. All the other players’ unanswered questions remained that way, possibilities frozen in place. We still don’t know what happened in that next room. Did they destroy Monarch? Or were they all captured, minds wiped, and set loose again? It would’ve been easy to play through — it was probably harder for us to decide to not find out. But I think it was worth it.